Creating a Dockerized Hugo + AsciiDoctor Toolchain

In the first article of this series we saw how to get Hugo with AsciiDoctor up and running.

With macOS and Homebrew, installing the needed tools is quite easy.

However, installing Ruby gems can be challenging from time to time, especially when both the macOS system Ruby and the Homebrew Ruby package are in place.

The system installation is outdated and not writable, yet it is still the default that the gem tool picks up when you try to install something.
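To see which Ruby is in charge, it helps to list every ruby and gem executable on the PATH in lookup order, because the first entry is the one a plain gem install will run. Below is a minimal POSIX shell sketch; the function name list_on_path is made up for illustration. On a stock Homebrew setup, the Homebrew Ruby is keg-only, i.e. not linked onto the PATH, which is typically why the system gem wins.

```shell
#!/bin/sh
# Hypothetical helper: print every executable called "$1" found on the PATH,
# in the order the shell searches them. The first line is the one that runs.
# The body is a subshell ( ... ), so changing IFS and set -f does not leak.
list_on_path() (
  name=$1
  found=0
  set -f          # do not glob-expand PATH entries
  IFS=:           # split PATH at colons
  for dir in $PATH; do
    if [ -f "$dir/$name" ] && [ -x "$dir/$name" ]; then
      printf '%s\n' "$dir/$name"
      found=1
    fi
  done
  [ "$found" -eq 1 ] || printf '(no %s on PATH)\n' "$name"
)

# Example: compare the candidates for ruby and gem.
#   list_on_path ruby
#   list_on_path gem
```

Running gem env afterwards shows, among other things, the INSTALLATION DIRECTORY the active gem would write to.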

You might get through it once, but since I tend to work on multiple Macs, things get quite annoying.

And what about Linux, which will be the tooling platform when it comes to continuous deployment?

And what about Windows?

For both setup speed and reproducibility, manual installations on varying platforms are not acceptable. Fortunately, for a few years now we have had a great alternative for toolchain-in-a-box provisioning. As you might have guessed, we’re talking about:

Docker

When it comes to modern software toolchains, it might be fair to say there is only one tool (or 1.5 tools) we have to take care of: Docker. The other "0.5" tool, which adds convenience and reproducibility when working with Docker-based toolchains, is Docker Compose.

For those who have not touched these tools so far, here’s the elevator pitch: Docker lets us create immutable, Linux-based "virtual machine images" that can be started as fully reproducible and super-lightweight "virtual machines".

More precisely, though, a Linux filesystem containing applications and their supporting files (minus the kernel) is packaged into a layered image, from which a fenced process is started on a Linux host system. The fencing, built on Linux kernel features such as namespaces and cgroups, covers process isolation, CPU, memory, networking and more. From within the fence, it feels like virtualization. But in the end, it’s just a native process running isolated on the Linux host.

Docker obviously runs natively on Linux, while Docker Desktop for Mac and Docker Desktop for Windows both give you a near-native experience on macOS and Windows by running the Docker engine for Linux containers in a lightweight Linux VM behind the scenes.

Creating a Hugo+AsciiDoc Toolchain Image

The basic idea now is to use a self-contained, Linux-based toolchain canned in a Docker image. Looking around on Docker Hub, probably the most popular Docker image repository (or registry, as it is called in the Docker world), you will find a lot of images providing something similar to what we need. Bryan Klein wrote a nice blog post describing his Docker-based Hugo toolchain along with a sample project, which we could use as well.

On the other hand, why not create a tailored image of our own? We (hopefully) know exactly what we want, so let’s build our tooling image ourselves.

I chose Ubuntu 18.04 as the base image. Image size is not our primary concern for toolchains, so there is no need to use Alpine or Debian Slim.

Since Docker images are immutable, we are not limited to the distribution’s package management when choosing what to install and how. The main benefit of managed packages is maintainability over time in a mutable system. With Docker, however, instead of upgrading a running system, we always create a new image with the upgrades included. As such, we are free to combine whatever works best at image build time. For that reason I chose to install Hugo directly as a precompiled static binary from the official release page. AsciiDoctor and its companion tools are installed as Ruby gems.
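Installing from the release page works well in a Dockerfile because the GitHub release assets follow a fixed naming scheme, so a version number is all it takes to locate a download. As a sketch (the helper name hugo_release_url is hypothetical), this derives the same URL that the ADD instruction in the Dockerfile below assembles from HUGO_VERSION. It assumes the Linux-64bit asset naming of the 0.55.x releases used here; later Hugo releases use a different asset naming, so check the release page before bumping the version.

```shell
#!/bin/sh
# Hypothetical helper: print the download URL of a Hugo release tarball.
# Assumption: asset naming of the 0.55.x releases used in this article,
# i.e. hugo_<version>_Linux-64bit.tar.gz; newer releases are named differently.
hugo_release_url() {
  version=${1:?usage: hugo_release_url VERSION}
  printf 'https://github.com/gohugoio/hugo/releases/download/v%s/hugo_%s_Linux-64bit.tar.gz\n' \
    "$version" "$version"
}

# Example (needs network access and Docker): check that the asset exists,
# then build the image with that version passed as a build argument.
#   v=0.55.1
#   curl -fsIL "$(hugo_release_url "$v")" > /dev/null \
#     && docker build --build-arg HUGO_VERSION="$v" -t rgielen/hugo-ubuntu .
```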

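The default process to be started is hugo server (hugo serve is an alias), which is what we want to use during development. It is bound to 0.0.0.0 because Hugo listens on 127.0.0.1 by default, which would not be reachable through the container’s published port.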

Here is the resulting Dockerfile. The full project can be found on GitHub.

```dockerfile
FROM ubuntu:18.04

# Hugo release to install; can be overridden with --build-arg HUGO_VERSION=...
ARG HUGO_VERSION=0.55.1

ENV DOCUMENT_DIR=/hugo-project

# Ruby plus build tools for compiling native gem extensions
RUN apt-get update && apt-get upgrade -y \
    && DEBIAN_FRONTEND=noninteractive apt-get install -y --no-install-recommends \
       ruby ruby-dev make cmake build-essential bison flex \
    && apt-get clean \
    && rm -rf /var/lib/apt/lists/* \
    && rm -rf /tmp/*

# AsciiDoctor, its extensions and syntax highlighters
RUN gem install --no-document asciidoctor asciidoctor-revealjs \
    rouge asciidoctor-confluence asciidoctor-diagram coderay pygments.rb

# Precompiled static Hugo binary from the official GitHub release page
ADD https://github.com/gohugoio/hugo/releases/download/v${HUGO_VERSION}/hugo_${HUGO_VERSION}_Linux-64bit.tar.gz /tmp/hugo.tgz
RUN cd /usr/local/bin && tar -xzf /tmp/hugo.tgz && rm /tmp/hugo.tgz

# The Hugo project is expected to be mounted here
RUN mkdir ${DOCUMENT_DIR}
WORKDIR ${DOCUMENT_DIR}
VOLUME ${DOCUMENT_DIR}

# Default: development server, listening on all interfaces so that
# the published port is reachable from the host
CMD ["hugo","server","--bind","0.0.0.0"]
```

With Docker installed, the image can be built from the command line. Since I want to publish it to Docker Hub later, I tag it with a name that matches my Docker Hub namespace:

```shell
docker build -t rgielen/hugo-ubuntu .
```

Running the Image with Docker

Now, from within a Hugo project, we can start a Docker container based on this image that serves the project. The image has already been pushed to Docker Hub, so the following command works even if the build step was skipped:

```shell
docker run --rm -p 1313:1313 -v "$PWD":/hugo-project rgielen/hugo-ubuntu:latest
```

This runs hugo server inside the container, on top of our working directory, which is mounted into the container as /hugo-project. The server’s port 1313 inside the container is published on port 1313 of the host, and --rm removes the container once it stops.

As a result, this call is equivalent to running hugo serve locally: you can open a browser at http://localhost:1313, and it will behave exactly like a locally running Hugo server, including LiveReload.

Moreover, we can pass custom hugo calls as well, for example to print the help text:

```shell
docker run --rm -v "$PWD":/hugo-project rgielen/hugo-ubuntu:latest hugo --help
```

In the same way, we can use the image to create the static HTML for site distribution:

```shell
docker run --rm -v "$PWD":/hugo-project rgielen/hugo-ubuntu:latest hugo
```

After running this command, the static files can be found locally in the public directory.
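Typing these command lines again and again gets tedious. One minimal way to shorten them is a shell function; the sketch below is just that, with the names dhugo, HUGO_IMAGE and DRY_RUN made up for illustration. Without arguments it starts the development server with the port published; otherwise it runs the given command in the container. Setting DRY_RUN=1 prints the docker command instead of executing it.

```shell
#!/bin/sh
# Hypothetical wrapper around the docker run calls above (POSIX sh).
# The body is a subshell ( ... ) so that "set --" and "exec" stay local.
dhugo() (
  image=${HUGO_IMAGE:-rgielen/hugo-ubuntu:latest}
  if [ "$#" -eq 0 ]; then
    # No arguments: run the image's default CMD (the Hugo dev server)
    # and publish its port, like the first docker run example.
    set -- docker run --rm -p 1313:1313 -v "$PWD:/hugo-project" "$image"
  else
    # Arguments: run them as the container command, e.g. "hugo --help".
    set -- docker run --rm -v "$PWD:/hugo-project" "$image" "$@"
  fi
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "$*"    # show the command line only
  else
    exec "$@"             # replace the subshell with docker
  fi
)
```

For example, dhugo hugo builds the static site into public, and dhugo hugo --help prints the help text.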

Docker Compose

With Docker Compose, containers running locally can be orchestrated.

A docker-compose.yml file is basically Infrastructure as Code, describing container-based services, the networks between them, persistent storage and more.

But Docker Compose can also be used to capture configuration that would otherwise have to be provided on the command line again and again.

As we saw before, our command lines for starting Hugo within the container are not exactly short and elegant. So let’s create the following docker-compose.yml file in our project:

```yaml
version: '3.5'

services:
  hugo:
    image: rgielen/hugo-ubuntu:latest
    ports:
      - "1313:1313"
    volumes:
      # Relative host paths are resolved against the directory
      # containing this docker-compose.yml
      - .:/hugo-project
```

Once this file is in place on a system with Docker Compose installed, starting the Hugo server boils down to

```shell
docker-compose up
```

The service can also be started in the background:

```shell
docker-compose up -d
```

The Docker Compose setup also lets us call custom Hugo commands. To display the help text as in the previous example, issue

```shell
docker-compose run --rm hugo hugo --help
```

The first hugo is the service name in the docker-compose.yml file, followed by the actual command call, hugo --help. As with docker run, --rm removes the one-off container afterwards.

Bonus: Jenkins

Maintaining the toolchain image over time should not be a manual task. When deploying to Docker Hub, you can also choose to create an automated build there, which connects a GitHub or Bitbucket repository with the Docker Hub build infrastructure. Once you push to your Git repository, the new Docker image is built and published automatically.

Unfortunately, turnaround times are not exactly blazingly fast. If you have a Jenkins installation at hand, it might be a better choice to create your own build-and-publish job there.

Let’s provide a simple Jenkinsfile, from which a Jenkins Pipeline job can be configured.

The pipeline uses the scripted pipeline approach and defines three stages to check out the code, build the image and (on success) publish it to Docker Hub. The docker global variable it uses is provided by the Docker Pipeline plugin.

```groovy
#!groovy

node {
    def dockerImage = null

    stage('Checkout') {
        checkout scm
    }

    stage('Build') {
        dockerImage = docker.build("rgielen/hugo-ubuntu")
    }

    stage('Push') {
        // (1) empty registry URL means Docker Hub; second argument is the credential ID
        docker.withRegistry('', 'hub.docker.com-rgielen') {
            dockerImage.push()
        }
    }
}
```

(1) A Docker Hub login is needed to push the image. Within Jenkins, a matching Username with password credential has to be created. In my Jenkins configuration, the credential ID is hub.docker.com-rgielen.

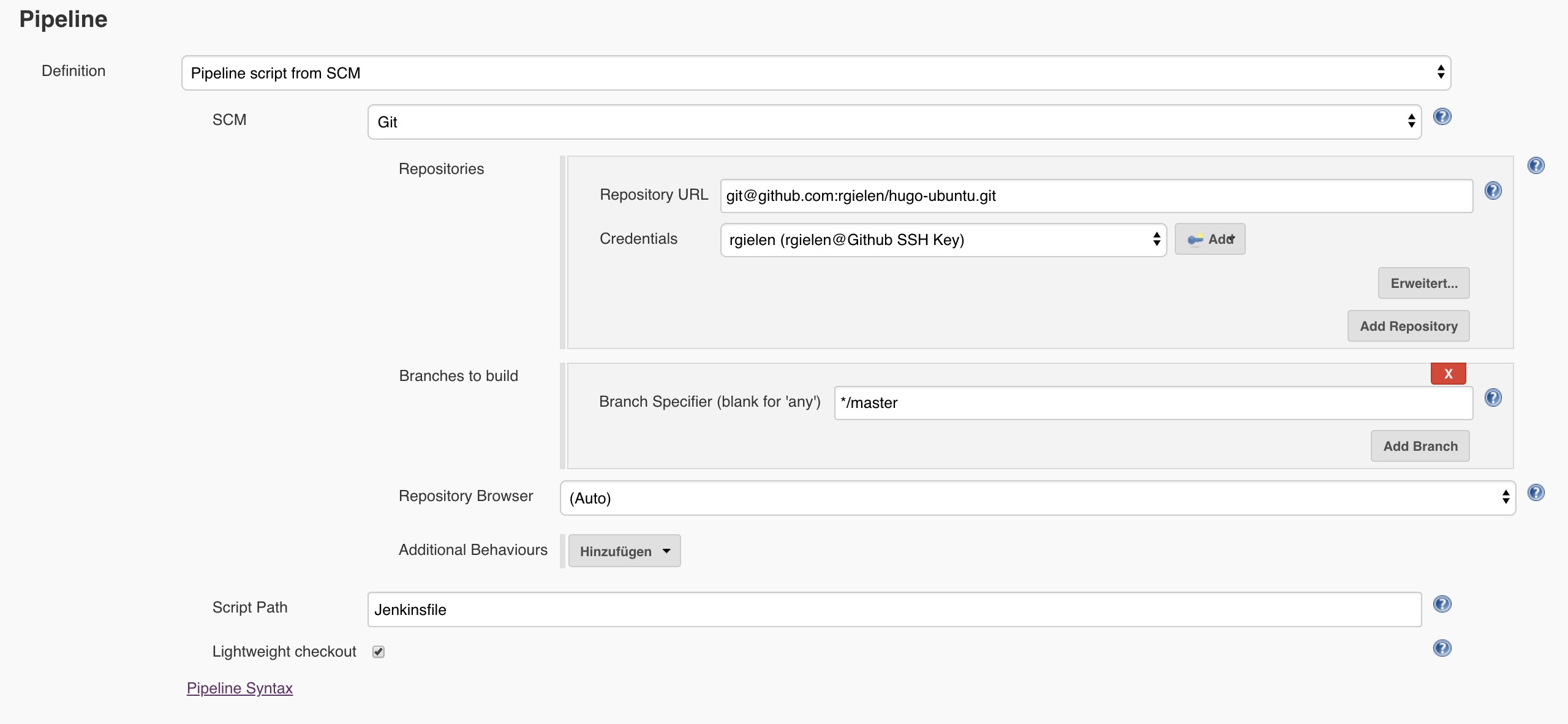

The following image shows the core config for the Jenkins pipeline job.

Ideally, a GitHub webhook triggers the pipeline job, or SCM polling is enabled. And of course there is more that could be done about versioning and tagging, or even pull request handling, but that might be a nice topic for a later post.